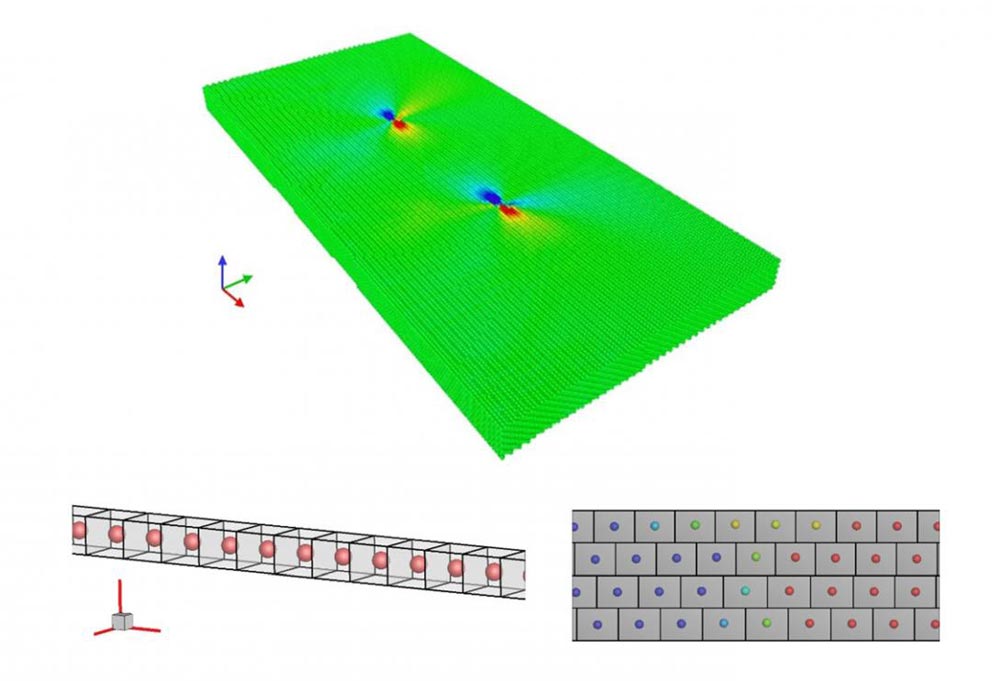

Supercomputer simulations show that at the atomic level, material stress doesn't behave symmetrically. Molecular model of a crystal containing a dissociated dislocation, with atoms encoded by their atomic shear strain. Below, snapshots of simulation results showing the relative positions of atoms in the rectangular prism elements; each element has dimensions 2.556 Å by 2.087 Å by 2.213 Å and has one atom at its center.

Credit: Liming Xiong

It's easy to take a lot for granted. Scientists do this when they study stress, the force per unit area on an object. Scientists handle stress mathematically by assuming it to have symmetry.

That means the components of stress are identical if you transform the stressed object with something like a turn or a flip. Supercomputer simulations, however, show that at the atomic level, material stress doesn't behave symmetrically.

The findings could help scientists design new materials such as glass or metal that doesn't ice up.

That's according to a study published in September 2018 in the Proceedings of the Royal Society A. Study co-author Liming Xiong summarized the two main findings.

“The commonly accepted symmetric property of a stress tensor in classical continuum mechanics is based on certain assumptions, and they will not be valid when a material is resolved at an atomistic resolution.”

Xiong added that “the widely used atomic Virial stress or Hardy stress formulae significantly underestimate the stress near a stress concentrator such as a dislocation core, a crack tip, or an interface, in a material under deformation.” Liming Xiong is an Assistant Professor in the Department of Aerospace Engineering at Iowa State University.

Xiong and colleagues treated stress in a different way than classical continuum mechanics, which assumes that a material is infinitely divisible such that the moment of momentum vanishes for the material point as its volume approaches zero.

Instead, they used the definition by mathematician A.L. Cauchy of stress as the force per unit area acting on three rectangular planes. With that, they conducted molecular dynamics simulations to measure the atomic-scale stress tensor of materials with inhomogeneities caused by dislocations, phase boundaries and holes.
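To make the idea of symmetry concrete, here is a minimal sketch, not the team's method, of how a stress tensor can be assembled from the force per unit area on three mutually perpendicular planes and then tested for symmetry. The function names, the row-per-plane convention, and the numbers are illustrative assumptions, not values from the study.

```python
# Illustrative sketch only: names, convention, and numbers are assumptions.

def stress_from_tractions(t_x, t_y, t_z):
    """Build a 3x3 stress tensor from traction vectors (force per unit area)
    on the planes whose normals point along x, y, and z.
    Assumed convention: row i holds the traction on the plane with normal i."""
    return [[float(v) for v in t] for t in (t_x, t_y, t_z)]

def skew_part(sigma):
    """Antisymmetric part, 0.5 * (sigma - sigma^T); all zeros if sigma is symmetric."""
    return [[0.5 * (sigma[i][j] - sigma[j][i]) for j in range(3)] for i in range(3)]

def frobenius_norm(m):
    """Square root of the sum of squared entries."""
    return sum(v * v for row in m for v in row) ** 0.5

def asymmetry_ratio(sigma):
    """Size of the antisymmetric part relative to the whole tensor (0 means symmetric)."""
    total = frobenius_norm(sigma)
    return 0.0 if total == 0.0 else frobenius_norm(skew_part(sigma)) / total

# Hypothetical tractions in arbitrary units: the shear on the x-plane (0.5)
# differs from the shear on the y-plane (0.1), so the tensor is not symmetric.
sigma = stress_from_tractions([1.0, 0.5, 0.0], [0.1, 2.0, 0.0], [0.0, 0.0, 3.0])
print(asymmetry_ratio(sigma))
```

In classical continuum mechanics the ratio would be exactly zero; a nonzero value at a given point is the kind of asymmetry the study describes.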

The computational challenges, said Xiong, swell up to the limits of what's currently computable when one deals with atomic forces interacting inside a tiny fraction of the space of a raindrop.

“The degree of freedom that needs to be calculated will be huge, because even a micron-sized sample will contain billions of atoms. Billions of atomic pairs will require a huge amount of computation resource,” said Xiong.
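A rough back-of-the-envelope check shows where "billions" comes from. The sketch below assumes, purely for illustration, that a cubic sample one micron on a side is packed at the same density as the elements in the figure caption (one atom per 2.556 Å by 2.087 Å by 2.213 Å prism) and that each atom contributes three translational degrees of freedom. The function name and the cubic sample shape are assumptions, not details from the study.

```python
# Illustrative estimate only; the sample shape and packing are assumptions.

ANGSTROM_PER_MICRON = 1.0e4  # 1 micron = 10,000 angstroms

def atoms_in_cube(edge_microns, cell_dims_angstrom, atoms_per_cell=1):
    """Estimate the number of atoms in a cube by dividing its volume
    by the volume occupied per atom."""
    a, b, c = cell_dims_angstrom
    cell_volume = a * b * c                                   # cubic angstroms
    sample_volume = (edge_microns * ANGSTROM_PER_MICRON) ** 3  # cubic angstroms
    return atoms_per_cell * sample_volume / cell_volume

n_atoms = atoms_in_cube(1.0, (2.556, 2.087, 2.213))
degrees_of_freedom = 3 * n_atoms  # x, y, z position of every atom

print(f"{n_atoms:.2e} atoms, {degrees_of_freedom:.2e} degrees of freedom")
```

Under these assumptions the estimate comes out in the tens of billions of atoms, consistent with Xiong's description.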

A further challenge, Xiong added, is the lack of a well-established computer code that can be used for the local stress calculation at the atomic scale. His team used the open source LAMMPS Molecular Dynamics Simulator, incorporating the Lennard-Jones interatomic potential and modified through the parameters they worked out in the paper.
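For readers unfamiliar with it, the Lennard-Jones potential is a standard textbook model of how the energy between two atoms varies with their separation: strongly repulsive up close, weakly attractive farther apart. The sketch below shows the standard 12-6 form in reduced units, where `epsilon` (well depth) and `sigma` (the separation at which the energy crosses zero) are both set to 1. It is not the team's LAMMPS setup, and the parameter values are placeholders rather than those worked out in the paper.

```python
# Standard 12-6 Lennard-Jones form in reduced units; parameter values are placeholders.

def lj_energy(r, epsilon=1.0, sigma=1.0):
    """Pair energy V(r) = 4*epsilon*[(sigma/r)^12 - (sigma/r)^6]."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)

def lj_force(r, epsilon=1.0, sigma=1.0):
    """Radial force F(r) = -dV/dr; positive means the atoms repel."""
    sr6 = (sigma / r) ** 6
    return 24.0 * epsilon * (2.0 * sr6 * sr6 - sr6) / r

r_min = 2.0 ** (1.0 / 6.0)  # separation at which the energy is lowest

print(lj_energy(r_min))  # close to -epsilon, the bottom of the well
print(lj_force(r_min))   # close to zero: no net push or pull at equilibrium
print(lj_force(0.9))     # positive: atoms squeezed together repel
```

Evaluating forces like this for every interacting pair of atoms, at every time step, is what drives the computational cost Xiong describes.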

“Basically, we're trying to meet two challenges,” Xiong said. “One is to redefine stress at an atomic level. The other one is, if we have a well-defined stress quantity, can we use supercomputer resources to calculate it?”

Xiong was awarded supercomputer allocations on XSEDE, the Extreme Science and Engineering Discovery Environment, funded by the National Science Foundation.

That gave Xiong access to the Comet system at the San Diego Supercomputer Center, and to Jetstream, a cloud environment supported by Indiana University, the University of Arizona, and the Texas Advanced Computing Center.

“Jetstream is a very suitable platform to develop a computer code, debug it, and test it,” Xiong said. “Jetstream is designed for small-scale calculations, not for large-scale ones. Once the code was developed and benchmarked, we ported it to the petascale Comet system to perform large-scale simulations using hundreds to thousands of processors. This is how we used XSEDE resources to perform this research.”

The Jetstream system is a configurable large-scale computing resource that leverages both on-demand and persistent virtual machine technology to support a much wider array of software environments and services than current NSF resources can accommodate.

“The debugging of that code needed cloud monitoring and on-demand intelligence resource allocation,” Xiong recalled. “We needed to test it first, because that code was not available. Jetstream has a unique feature of cloud monitoring and on-demand intelligence resource allocation. These are the most important features for us to choose Jetstream to develop the code.”

“What impressed our research group most about Jetstream,” Xiong continued, “was the cloud monitoring. During the debugging stage of the code, we really need to monitor how the code is performing during the calculation. If the code is not fully developed, if it's not benchmarked yet, we don't know which part is having a problem. The cloud monitoring can tell us how the code is performing while it runs. This is very unique.”

The simulation work, said Xiong, helps scientists bridge the gap between the micro and the macro scales of reality, in a methodology called multiscale modeling. “Multiscale is trying to bridge the atomistic continuum. In order to develop a methodology for multiscale modeling, we need to have consistent definitions for each quantity at each level… This is very important for the establishment of a self-consistent concurrent atomistic-continuum computational tool. With that tool, we can predict the material performance, the qualities and the behaviors from the bottom up. By just considering the material as a collection of atoms, we can predict its behaviors. Stress is just a stepping stone. With that, we have the quantities to bridge the continuum,” Xiong said.

Xiong and his research group are working on several projects to apply their understanding of stress to design new materials with novel properties. “One of them is de-icing from the surfaces of materials,” Xiong explained. “A common phenomenon you can observe is ice that forms on a car window in cold weather. If you want to remove it, you need to apply a force on the ice. The force and energy required to remove that ice is related to the stress tensor definition and the interfaces between ice and the car window. Basically, the stress definition, if it's clear at a local scale, it will provide the main guidance to use in our daily life.”

Xiong sees great value in the computational side of science. “Supercomputing is a really powerful way to compute. Nowadays, people want to speed up the development of new materials. We want to fabricate and understand the material behavior before putting it into mass production. That will require a predictive simulation tool. That predictive simulation tool really considers materials as a collection of atoms. The degree of freedom associated with atoms will be huge. Even a micron-sized sample will contain billions of atoms. Only a supercomputer can help. This is very unique for supercomputing,” said Xiong.